Like most experiments, this one started with a question: is it possible to monitor water quality in small streams using Unmanned Aerial Vehicles (UAVs aka “drones”)?

Traditionally, testing and monitoring water quality is a lot of work. Collecting and analyzing samples is time- and labor-intensive, making it challenging (and often expensive) to get data for large areas. Satellite images have been used to estimate some water quality parameters (e.g., turbidity or cloudiness, and chlorophyll-a levels, which indicate algae), making it more practical to analyze larger areas. However, the lower spatial resolution of these images often limits their usefulness for monitoring smaller water bodies (like streams), where conservation efforts are actually concentrated.

There are a number of additional challenges to remotely sensing turbidity using satellites: it’s hard to see small streams unless you buy expensive high-resolution imagery, you don’t have any control over when the satellite collects data, and most importantly, turbidity tends to be highest shortly after rain, when it is often too cloudy to see streams from above.

Send in the Drones

With UAVs and a variety of sensors becoming available, scientists now theoretically have the ability to monitor streams remotely at a higher spatial resolution, and at specific times, such as shortly after a rainstorm when there is typically more sediment in the water.

UAV-based monitoring systems offer the benefits of low-cost imaging at higher spatial resolutions and fully controlled temporal scales, and also can provide useful data on land cover and crop health in addition to water quality. So far, so good.

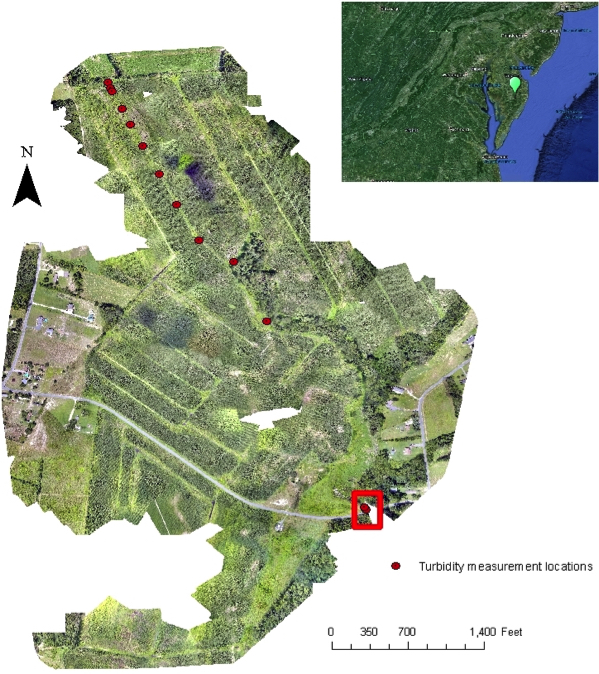

But the proof is always in the field application, so I spent a day with Conservancy scientists Jon Fisher and Tim Boucher testing the efficacy of using a UAV to monitor water quality. The laboratory for our initial foray into UAV-based field science was a stream on the Conservancy’s Taylor Farm Preserve in the Nassawango River basin in Maryland, where continuous monitoring is essential for the restoration work being undertaken (Fig. 1, left).

The Experiment

Our thinking: because clean water absorbs nearly all incoming radiation, it appears black in remote sensing images. Suspended particles alter the amount of light reflected by the water, especially in the visible (0.4-0.7 μm) and near-infrared (NIR, 0.8-2 μm) wavelengths: an increase in the concentration of suspended solids causes an increase in the reflected light measured at these wavelengths.

In theory, these alterations can be recorded using a camera mounted on a UAV to generate an estimate of how much sediment is in the water.

Our experiment: in an area where the stream widens at a bridge, we used an eBee Ag drone to take an image of the relatively clear water (we also collected water samples from the stream), and then kicked up sediment using the highly scientific method of stomping in the water (red box, Fig. 1) before taking a second set of images and water samples.
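At its simplest, the before/after comparison amounts to averaging the pixel values inside the disturbed area (the red box) in each image and looking at the change. Below is a minimal Python sketch, assuming the two images are already co-registered single-band reflectance grids stored as plain lists of rows, with the region of interest given as row/column bounds. The helper names and the grid values are hypothetical illustrations, not our field data.

```python
from statistics import mean


def roi_mean(image, roi):
    """Mean pixel value inside a rectangular region of interest.

    image: single-band grid as a list of rows.
    roi:   (row_start, row_stop, col_start, col_stop), stop indices exclusive.
    """
    r0, r1, c0, c1 = roi
    return mean(image[r][c] for r in range(r0, r1) for c in range(c0, c1))


def roi_change(before, after, roi):
    """Change in mean value (after minus before) for the same ROI.

    Only meaningful if the two images are pixel-aligned (co-registered);
    otherwise the ROI covers different ground in each image.
    """
    return roi_mean(after, roi) - roi_mean(before, roi)


# Hypothetical 4x4 red-band reflectance grids: uniform clear water before,
# brighter (more turbid) pixels in the centre afterwards.
before = [[0.02] * 4 for _ in range(4)]
after = [row[:] for row in before]
for r in (1, 2):
    for c in (1, 2):
        after[r][c] = 0.05

print(roi_change(before, after, (1, 3, 1, 3)))
```

The sketch assumes the hard part (making sure each pixel covers the same patch of water in both images) is already solved. In the field, that assumption is exactly what needs verifying.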

Standing next to the stream, we could see the water looked different after we churned up the sediment, but we could not establish a clear relationship between the water turbidity and the measurements using the images taken by the UAV.

Not Quite Success, Not Quite an Epic Fail Either

While it’s possible that UAV stream monitoring is not viable, after only one try we’re not ready to call the field experiment a failure. There are several reasons the test might not have worked in this instance.

While the UAV provides very high-resolution data, we didn’t have a set of very precise ground control points that would have allowed us to ensure that each pixel was in exactly the right location so that we could compare data from subsequent flights.
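Ground control points tie image pixels to surveyed ground positions, which lets pixels from different flights line up. As a deliberately simplified illustration (UAV photogrammetry workflows perform full orthorectification with a camera model, and this sketch does not), the Python below fits a 2D affine transform from pixel (column, row) coordinates to ground (X, Y) coordinates by least squares. The function names and GCP coordinates are hypothetical. At least three non-collinear GCPs are required, and extra points help average out measurement error.

```python
def _solve3(a, b):
    """Solve the 3x3 system a·x = b by Gaussian elimination with partial pivoting."""
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for i in range(3):
        pivot = max(range(i, 3), key=lambda r: abs(m[r][i]))
        m[i], m[pivot] = m[pivot], m[i]
        for r in range(i + 1, 3):
            factor = m[r][i] / m[i][i]
            for c in range(i, 4):
                m[r][c] -= factor * m[i][c]
    x = [0.0, 0.0, 0.0]
    for i in (2, 1, 0):  # back substitution
        x[i] = (m[i][3] - sum(m[i][c] * x[c] for c in range(i + 1, 3))) / m[i][i]
    return x


def fit_affine(pixels, ground):
    """Least-squares affine fit from GCPs (simplified 2D model, no camera geometry).

    pixels: [(col, row), ...] image positions of the GCPs.
    ground: [(X, Y), ...] surveyed positions of the same GCPs.
    Returns ((a, b, c), (d, e, f)) with X = a*col + b*row + c, Y = d*col + e*row + f.
    """
    design = [(col, row, 1.0) for col, row in pixels]
    # Normal equations (A^T A) p = A^T y, solved once for X and once for Y.
    ata = [[sum(u[i] * u[j] for u in design) for j in range(3)] for i in range(3)]
    params = []
    for k in (0, 1):
        aty = [sum(u[i] * g[k] for u, g in zip(design, ground)) for i in range(3)]
        params.append(tuple(_solve3(ata, aty)))
    return tuple(params)


def pixel_to_ground(params, col, row):
    """Map an image pixel to ground coordinates with a fitted affine transform."""
    (a, b, c), (d, e, f) = params
    return a * col + b * row + c, d * col + e * row + f


# Hypothetical GCPs for a 5 cm/pixel image (local coordinates in metres).
gcp_pixels = [(0, 0), (100, 0), (0, 100), (100, 100), (50, 20)]
gcp_ground = [(1000 + 0.05 * c, 2000 - 0.05 * r) for c, r in gcp_pixels]
params = fit_affine(gcp_pixels, gcp_ground)
print(pixel_to_ground(params, 50, 50))
```

Once each flight has its own fitted transform, the same ground location can be looked up in both images, which is the comparison we could not make reliably without precise GCPs.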

We also learned that sediment settles fast, and that we needed more rigorous procedures to ensure we sampled the water in exactly the same way every time. This was even harder because we were using two separately supplied cameras (one to detect near-infrared light and one for the visible spectrum) but only one UAV, so the cameras had to be flown on separate flights, and the two image sets were captured several minutes apart.
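A rough idea of how fast sediment settles comes from Stokes' law for a small sphere sinking in still water: v = g (ρ_s - ρ_w) d² / (18 μ). The Python sketch below assumes quartz-density grains (2650 kg/m³), water near 20 °C (μ ≈ 1.0 × 10⁻³ Pa·s), and a hypothetical 0.3 m water depth. Stokes' law only holds for fine particles (silt and very fine sand), and the sketch ignores stream turbulence, which keeps fines suspended longer. Treat the times as idealized lower bounds, not predictions for our site.

```python
G = 9.81              # gravitational acceleration, m/s^2
RHO_SEDIMENT = 2650   # assumed grain density (quartz), kg/m^3
RHO_WATER = 1000      # water density, kg/m^3
MU_WATER = 1.0e-3     # dynamic viscosity of water near 20 °C, Pa*s


def stokes_velocity(diameter_m):
    """Terminal settling velocity (m/s) of a small sphere in still water (Stokes' law)."""
    return G * (RHO_SEDIMENT - RHO_WATER) * diameter_m ** 2 / (18 * MU_WATER)


def settling_time(diameter_m, depth_m):
    """Seconds for a grain to fall through depth_m of still water at Stokes velocity."""
    return depth_m / stokes_velocity(diameter_m)


depth = 0.3  # hypothetical water depth, m
grains = [("very fine sand, 100 um", 100e-6),
          ("coarse silt, 50 um", 50e-6),
          ("medium silt, 20 um", 20e-6)]
for label, d in grains:
    print(f"{label}: {settling_time(d, depth) / 60:.1f} min")
```

Under these assumptions, very fine sand clears 0.3 m of still water in well under a minute and coarse silt in about two minutes. A gap of several minutes between flights is therefore long enough for the coarser part of the disturbed sediment to drop out.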

The images of the small stream were further complicated by the stream bottom being visible through the water and by floating mats of algae on the surface. In clear, shallow water the camera sees the channel bottom in addition to the turbidity, which makes it very difficult to distinguish the channel bottom from the suspended matter that causes the turbidity.

Stay Tuned for Drones in the Field, Part Two: We Need More Data

When we go back for our next series of tests, we will be better prepared to select a site suitable for setting up ground control points to improve our location accuracy, and to use a more consistent sampling plan. We also plan to conduct our next field experiment using a multispectral camera so we can get the data we need in a single flight, rather than having to fly the same area twice and hoping the stream doesn’t change.

Once we have our new set of data (for several different times and conditions), we will correlate the reflectance values with the turbidity of the water samples to see whether reflectance can be used to measure turbidity. If the method works, the Conservancy’s field experts will have an additional robust tool to monitor water quality on the Taylor Farm Preserve and in projects around the world.
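The planned analysis can be sketched as a Pearson correlation between image reflectance and sample turbidity, plus an ordinary least-squares line that could then estimate turbidity from reflectance. This is a minimal Python sketch with made-up paired values (reflectance in one band, turbidity in NTU), and the function names are hypothetical. The new data would still need to settle which band to use, whether to transform the values, and whether a linear model fits at all.

```python
from math import sqrt


def _centered_sums(x, y):
    """Return (Sxy, Sxx, Syy, mean_x, mean_y) for paired samples."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy, sxx, syy, mx, my


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    sxy, sxx, syy, _, _ = _centered_sums(x, y)
    return sxy / sqrt(sxx * syy)


def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    sxy, sxx, _, mx, my = _centered_sums(x, y)
    slope = sxy / sxx
    return slope, my - slope * mx


# Hypothetical paired observations: band reflectance vs. sample turbidity (NTU).
reflectance = [0.021, 0.034, 0.048, 0.062, 0.075]
turbidity = [4.0, 9.5, 15.0, 22.0, 26.5]

r = pearson_r(reflectance, turbidity)
slope, intercept = fit_line(reflectance, turbidity)
print(f"r = {r:.3f}; turbidity ≈ {slope:.1f} * reflectance + {intercept:.1f}")
```

With only a handful of samples, a high r can arise by chance. The relationship only means something if it holds across enough paired samples taken under different conditions.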

Essayas Ayana, Ph.D., is a Conservancy NatureNet Science Fellow at Columbia University. A pursuit of The Nature Conservancy and leading research universities, the NatureNet Science Fellows program is a trans-disciplinary postdoctoral fellowship aimed at bridging academic excellence and conservation practice to confront climate change and create a new generation of conservation leaders who marry the rigor of academic science and analysis to real-world application in the field.

Good idea, but there is a lot to study here.

Hello All!

I am pretty new to UAV applications. We have already purchased a drone (Phantom 4), but it only produces imagery in JPEG and DNG formats, whereas we need GeoTIFF. That is one problem.

Another thing: we need to buy a new camera. Would an infrared or a thermal camera be sufficient for water quality monitoring?

I would appreciate any advice you can give me on this.

Thanks

Keep up the great work! We look forward to tracking your progress on this potentially cost-effective means of data collection.

Eric B. Partee

Executive Director

Little Miami Conservancy

Loveland OH 45140

We’ve just completed our latest campaign. We deployed a smart turbidity sensor while flying our drone. We’ll hopefully come up with some interesting results. Stay tuned.

Hello Essayas:

You might use your new drone and camera system at a water-treatment facility. They typically have many ponds or lagoons, at different stages of treatment and with differing levels of nutrients and algae. The facilities probably sample and analyze the ponds regularly, so you would not need to pay for analysis. It would provide a nice place to calibrate your measurements of algae concentration. It might not be a useful test site for turbidity, though.

Cheers!

– Tom Dill, Geologist

Thanks, Tom, that is a smart use of smart devices. Measuring water parameters is quite a demanding task, so coordinating with a water treatment facility would definitely be a big relief. If the water source is a river (instead of wastewater), we could still fly the drone at the entry point, where turbidity will remain higher, while sensing algae at other locations. I’m not sure how easy it will be to get permission to fly near those facilities, though.

Jon, Tim and Essayas:

It looks like much of the stream’s length lacks a closed tree canopy. So, some related questions:

1) Was there a section with trees near the stream that caused problems flying the UAV? How well did it avoid taller trees and their branches? Were there any maneuverability problems in tight areas? It looks like a couple of your “turbidity measurement locations” might have faced this challenge.

2) Perhaps TNC is trying to establish a riparian tree canopy along the stream, if that is the natural condition. There appear to be robust aquatic macrophytes and algae growing in and along the stream. Have you considered using the UAV to monitor that vegetation?

3) Where can we find more info on the camera types, especially the multispectral version, you are referring to?

Thanks

1) There are trees near the stream where we kicked up sediment. The trees will definitely make it harder to see ground control points on our next planned flight. We flew the drone at a higher altitude (though within the 400-foot limit) so that we would not crash into the trees near the stream.

2) Since our first field campaign was a reconnaissance of the workability of the UAV system for turbidity measurement, we were not trying to observe vegetation. We used the RGB and NIR cameras, which produce highly detailed photos. In spectral terms, however, the multispectral camera has a red edge band (735 nm), which is suitable for identifying plant types, nutrition and health status, and for characterizing plant cover and abundance (http://www.blackbridge.com/rapideye/upload/Red_Edge_White_Paper.pdf). This camera may be the appropriate one for monitoring vegetation.

3) Details on the camera types are available at https://www.sensefly.com/drones/accessories.html

Great idea, but probably illegal in Wyoming, given their restrictive laws about trespassing to collect data. I recall reading an article about that last year.

Here is a link to one of the many stories online about the gag law: http://www.usatoday.com/story/news/nation/2015/05/14/wyoming-data-trespassing/27310567/

Not to worry, Theresa: for this work we are flying exclusively over property owned by The Nature Conservancy, and we also got permission from the farmer we lease the land to, the FAA, and the manager of the nearest airport to ensure that we would not only not be violating the law, but also not creating ill will.